Integrations: Integrate SUVA Agents with Large Language Models

Integrating Large Language Models (LLMs) with SUVA allows you to unleash the true potential of interactive chatbots. LLMs make chatbots more intelligent, more context-aware, and capable of handling customer queries with human-like proficiency.

The LLM integration process is divided into two parts: Integrations and Agent Configuration. This document explains the Integrations part.

Integrations

Navigate to Large Language Model > Integrations. You will find two types of LLMs:

-

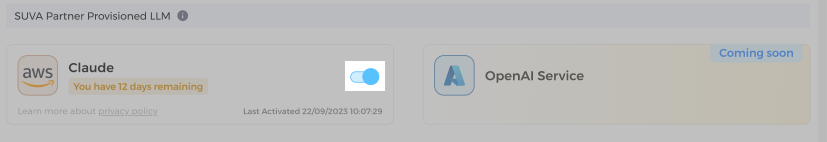

SUVA Partner Provisioned LLMs such as Claude and OpenAI Service.

-

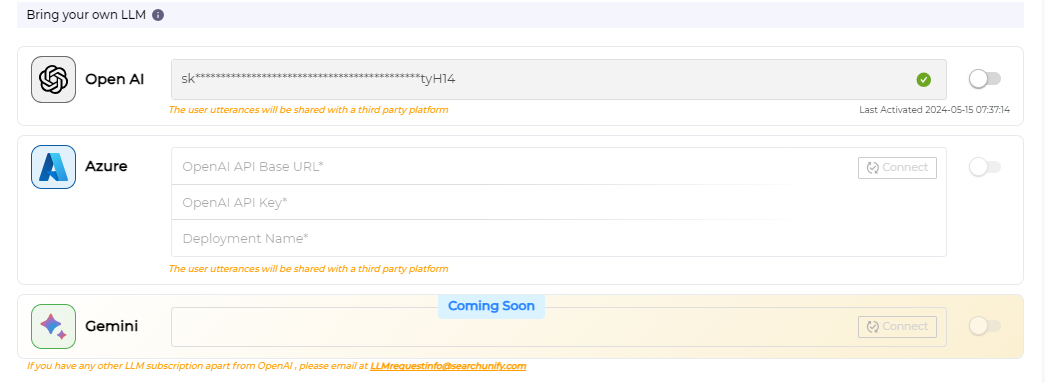

Bring your own LLM such as OpenAI, Azure, and Gemini.

SUVA Partner Provisioned LLMs, like Claude by AWS and OpenAI Service by Azure, ensure data privacy. Unlike some third-party LLMs, Claude and OpenAI Service neither store customer data nor use it to train their models.

SUVA virtual agents can currently be integrated with LLMs such as Claude, OpenAI, Azure, and Gemini.

Setting Up LLM Integration

1. From the SUVA admin panel, navigate to Large Language Model > Integrations.

2. Under the Integrations tab, you can see two sets of LLMs:

   2.1) SUVA Partner Provisioned LLM, which includes Claude and OpenAI Service.

   2.2) Bring your own LLM, which includes popular third-party LLMs such as OpenAI, Azure, and Gemini.

3. To use Claude by AWS, start a 14-day free trial. To continue with Claude after the trial period, reach out to your CSM or send an email to llmrequestinfo@searchunify.com.

   Note: The Claude free trial is available only on the sandbox instance for testing.

4. Alternatively, use a third-party LLM by entering the required details. OpenAI, Azure, and Gemini are the third-party LLMs that are currently supported.

   Note: To obtain the authentication details needed to connect your SUVA agent with an LLM, contact your IT/DevOps team or the person responsible for administering Large Language Models in your organization.

5. Once the connection is established after you enter the required details, turn on the toggle to activate either the OpenAI or the Azure integration.

Complete the integration process by finalizing the Agent Configuration.

Updating the Authentication Details

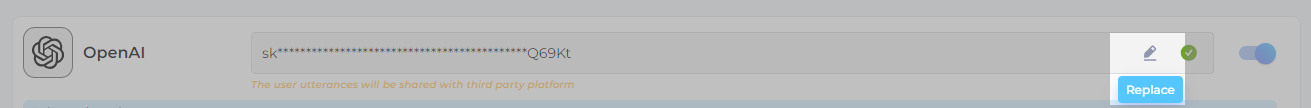

Use the edit icon to replace the authentication details. After confirming that you want to replace the key, the current key is removed.

Note: The current integration is disabled if the authentication key changes. Reconnect with the new authentication key and activate the integration. The selected virtual agent(s) and the temperature setting remain unaffected.

While changing keys, ensure that the new key is correct. If the new authentication key is invalid and you either close the configuration tab or save the settings with the invalid key, the app continues to function based on the previous key.
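
The key-replacement behavior described in this section can be pictured as a small state model. The following Python sketch is illustrative only and is not SUVA's implementation: the `LLMIntegration` class, its fields, the default temperature, and the `validate` callback are all hypothetical names and values introduced for this example. It assumes that a replacement key takes effect only if it validates, that a successful replacement leaves the integration disabled until it is activated again, and that the selected agents and the temperature setting are never modified.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class LLMIntegration:
    """Hypothetical model of one LLM integration's settings (not a SUVA API)."""
    key: str
    active: bool = True
    agents: List[str] = field(default_factory=list)
    temperature: float = 0.5  # placeholder default, chosen for illustration

    def replace_key(self, new_key: str, validate: Callable[[str], bool]) -> bool:
        """Try to swap the key; return True only if the swap took effect."""
        if not validate(new_key):
            # Invalid key: nothing changes, so the previous key stays in effect.
            return False
        self.key = new_key
        # A changed key disables the integration until it is activated again.
        self.active = False
        return True

    def activate(self) -> None:
        """Re-enable the integration after reconnecting with the new key."""
        self.active = True


# Example run with a stand-in validity check (a real check would test the connection).
looks_valid = lambda k: k.startswith("valid-")
integration = LLMIntegration(key="old-key", agents=["support-bot"], temperature=0.2)
integration.replace_key("typo-key", looks_valid)   # rejected, old key still used
integration.replace_key("valid-new", looks_valid)  # accepted, integration now inactive
integration.activate()                             # agents and temperature unchanged
```

In this model the agent selection and temperature live alongside the key but are never written by `replace_key`, which mirrors the note that those settings remain unaffected.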

Last updated: Thursday, September 25, 2025