Agent Configuration: Select Agent(s) and LLM Model, and Set Temperature

After enabling an LLM, complete the process by configuring one or more agents to use the activated LLM(s).

-

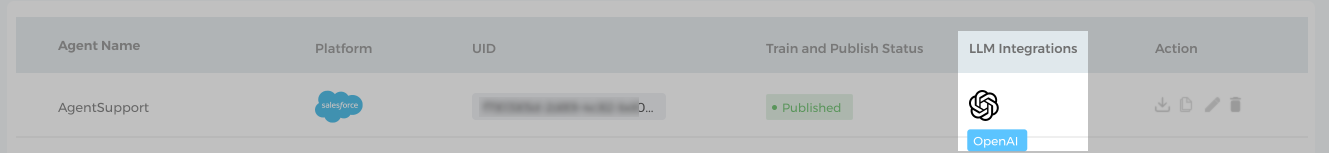

Switch to the Agent configuration tab. You will see a list of Virtual Agents added in your SUVA instance.

- Expand an agent to see three options:

i) Select Model. Pick a suitable LLM. For OpenAI, three options are available: None, OpenAI - 4K context BYOL, and OpenAI - 16k context BYOL.

ii) Set Temperature. Choose a value in the range 0 to 1.0, in increments of 0.2 (0.2, 0.4, and so on). The recommended temperature is 0.2.

Note: 0.2 may not be the ideal temperature for your LLM integration, because the ideal temperature depends on your use case. However, 0.2 is a good place to start. You can then adjust the temperature up or down until you get the results you want.

iii) Prompt (optional). The prompt is the text that you give to the LLM to start the response-generation process. It should be clear and user-friendly.

Note: An activated LLM can be linked to more than one Virtual Agent.
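The three per-agent settings above can be pictured as a small configuration record. The sketch below is only an illustration: `AgentLLMConfig`, its field names, and `validate()` are hypothetical and are not a SUVA API. The model names are copied from the Select Model options listed above. Including 0.0 as a selectable temperature is an assumption based on the stated 0 to 1.0 range.

```python
from dataclasses import dataclass
from typing import Optional

# Model names as listed in the Select Model options on this page.
ALLOWED_MODELS = ("None", "OpenAI - 4K context BYOL", "OpenAI - 16k context BYOL")

# Temperatures in steps of 0.2. Including 0.0 is an ASSUMPTION (the range starts at 0).
ALLOWED_TEMPERATURES = (0.0, 0.2, 0.4, 0.6, 0.8, 1.0)


@dataclass
class AgentLLMConfig:
    """HYPOTHETICAL record of one agent's LLM settings (not a SUVA API)."""
    agent_name: str
    model: str = "None"
    temperature: float = 0.2      # recommended starting point
    prompt: Optional[str] = None  # the prompt is optional

    def validate(self) -> None:
        """Raise ValueError if the model or temperature is not an allowed option."""
        if self.model not in ALLOWED_MODELS:
            raise ValueError(f"Unknown model: {self.model!r}")
        # Compare with a tolerance: 0.2, 0.4, ... are not exact in binary floating point.
        if not any(abs(self.temperature - t) < 1e-9 for t in ALLOWED_TEMPERATURES):
            raise ValueError(
                f"Temperature must be one of {ALLOWED_TEMPERATURES}, got {self.temperature}"
            )


# One activated LLM linked to two agents, each with its own temperature and prompt.
configs = [
    AgentLLMConfig("Support Agent", model="OpenAI - 16k context BYOL"),
    AgentLLMConfig(
        "Sales Agent",
        model="OpenAI - 16k context BYOL",
        temperature=0.4,
        prompt="Answer briefly and cite the source article.",
    ),
]
for cfg in configs:
    cfg.validate()  # raises ValueError on an invalid setting
```

The agent names and prompt text are made up. The point is that the model choice is shared while the temperature and prompt are set per agent, which matches the note that one LLM can serve several Virtual Agents.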

-

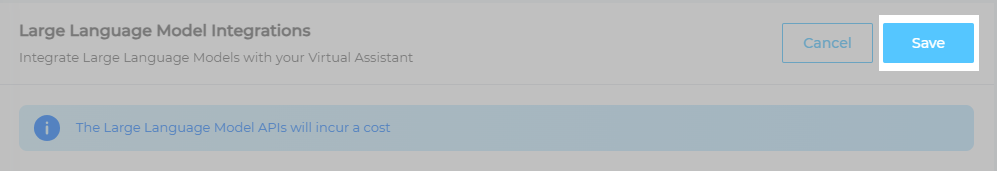

Save your settings. If you skip this step, then the integration will not work.

Once you have saved your settings, the setup is complete, and the LLM integration is configured with the selected virtual agent(s). For how to use it, see LLM Integration Usage.

How Temperature Setting Impacts Chatbot Responses

LLMs can sometimes hallucinate and give inaccurate responses. The temperature setting can help you reduce hallucination. Temperature is a hyperparameter that controls the randomness of responses. A higher temperature produces more unpredictable and creative output, while a lower temperature produces more common and conservative output.

The temperature scale runs from 0 to 1.0. At 0, the chatbot produces only conservative responses. At 1.0, it is nudged toward more human-like responses. For example, at a temperature of 0.4 the model generates more predictable and less creative text than it would at 1.0. The temperature can only be set to even values: 0.2, 0.4, 0.6, 0.8, and 1.0.
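The effect of temperature can be illustrated with the standard temperature-scaled softmax used in LLM sampling. The model's raw scores (logits) for candidate next tokens are divided by the temperature before they are turned into probabilities. This is a minimal sketch of that general technique, not SUVA's or OpenAI's internal code. The logits are made-up numbers. Treating 0 as greedy decoding (always picking the top token) is the limiting behavior, handled here as a special case to avoid dividing by zero.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw next-token scores into probabilities, scaled by temperature.

    Lower temperatures sharpen the distribution toward the top-scoring token.
    Higher temperatures flatten it, so less likely tokens are chosen more often.
    A temperature of 0 is treated as greedy decoding (all probability on the top token).
    """
    if temperature == 0:
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Made-up scores for four candidate next words.
logits = [2.0, 1.0, 0.5, 0.1]
for t in (0.2, 1.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

With these toy scores, the top word receives about 99% of the probability at 0.2 but only about 57% at 1.0. That is why low temperatures give more predictable answers and high temperatures give more varied ones.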

The best temperature depends on the desired output. However, temperature is only one of many factors that influence LLM output. Other factors include:

- The prompt is the text that you give to the LLM to start the response-generation process. The prompt can have a big impact on the output, so it is important to choose a prompt that is clear and concise.

- The training data is the set of text that the LLM was trained on. The training data can affect the output in a number of ways, including its diversity, accuracy, and fluency.

- The model architecture is the design of the LLM. The model architecture can affect the output in a number of ways, including the speed of the generation process and the complexity and accuracy of the output.

The recommended temperature is 0.2. Note: 0.2 isn't the ideal temperature for every LLM. The ideal temperature setting varies with the specific task and the desired output. To find the ideal temperature for your LLM integration, experiment with a few values.

Following are some example responses that we generated with different temperature settings:

Temperature Setting @ 0.2

Temperature Setting @ 1.0