How Temperature Setting Impacts Chatbot Responses

Large language models (LLMs) can sometimes hallucinate and give inaccurate responses. The temperature setting is one way to rein this in. Temperature is a hyperparameter that controls the randomness of responses. A higher temperature produces more unpredictable and creative results, while a lower temperature produces more common and conservative output.

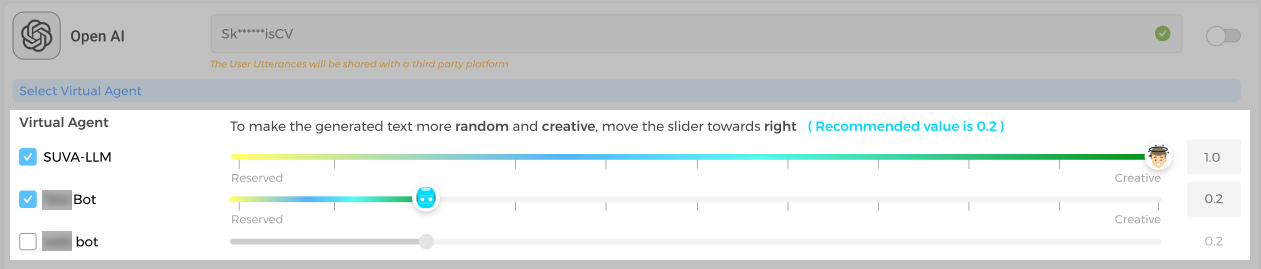

The temperature scale runs from 0 to 1.0. A setting of 0 causes the chatbot to produce only its most conservative responses, while 1.0 nudges it toward more varied, human-like responses. For example, at a temperature of 0.5 the model generates more predictable and less creative text than it would at 1.0.
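To make the effect of the scale concrete, here is a minimal sketch of how temperature is commonly applied: the model's raw scores for candidate tokens are divided by the temperature before being turned into probabilities. The function name `softmax_with_temperature` and the scores in `toy_logits` are illustrative assumptions, not taken from any particular chatbot, and treating a temperature of 0 as "always pick the top token" is a common convention rather than a guarantee about any specific product.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Turn raw token scores into probabilities, rescaled by temperature."""
    if temperature <= 0:
        # Assumption: temperature 0 means greedy decoding, so all probability
        # goes to the highest-scoring token (this also avoids dividing by zero).
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three candidate next tokens.
toy_logits = [2.0, 1.0, 0.1]

for t in (0.2, 0.5, 1.0):
    probs = softmax_with_temperature(toy_logits, t)
    print(f"temperature={t}: {[round(p, 3) for p in probs]}")
```

In this sketch, lower temperatures concentrate almost all of the probability on the top-scoring token, while a temperature of 1.0 leaves the lower-scoring tokens a realistic chance of being picked.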

The best temperature setting for a particular task depends on the desired output. For tasks that require creative or original text, a higher temperature may be preferable. For tasks that require factual or accurate text, a lower temperature may be preferable.

It is important to note that the temperature setting is just one of the many factors that can affect the output of an LLM. Other factors include the prompt, the training data, and the model architecture.

- The prompt is the text that you give to the LLM to start the response generation process. The prompt can have a big impact on the output, so it is important to choose a prompt that is clear and concise.

- The training data is the set of text that the LLM was trained on. The training data can affect the output in a number of ways, including the diversity of the output, the accuracy of the output, and the fluency of the output.

- The model architecture is the design of the LLM. The model architecture can affect the output in a number of ways, including the speed of the generation process, the complexity of the output, and the accuracy of the output.

That said, we recommend starting with a temperature of 0.2. This is not necessarily the ideal setting for an LLM, since the ideal value varies with the specific task and the desired output, but it is a good starting point for many tasks.

If you are not sure what temperature setting to use, it is a good idea to experiment with different settings and see what works best for you.
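One way to run such an experiment is to send the same prompt several times at each setting and compare how much the replies vary. The sketch below shows the shape of that loop only. `fake_generate` and `CANNED_REPLIES` are hypothetical stand-ins for a real chatbot call, and the way the stand-in uses temperature (as the chance of picking a random reply) is a simplification chosen so the example runs without a model.

```python
import random

# Hypothetical canned replies, ordered from most to least conservative.
CANNED_REPLIES = [
    "Paris is the capital of France.",
    "The capital of France is Paris, a city on the Seine.",
    "France's capital? That would be Paris, the City of Light.",
]

def fake_generate(prompt, temperature, rng):
    """Stand-in for a real chatbot call (not a real API)."""
    # With probability equal to the temperature, pick any reply at random;
    # otherwise return the most conservative reply.
    if rng.random() < temperature:
        return rng.choice(CANNED_REPLIES)
    return CANNED_REPLIES[0]

def compare_temperatures(generate, prompt, temperatures, samples=5, seed=0):
    """Collect several replies per temperature so they can be compared."""
    rng = random.Random(seed)  # fixed seed keeps the experiment repeatable
    results = {}
    for t in temperatures:
        results[t] = [generate(prompt, t, rng) for _ in range(samples)]
    return results

results = compare_temperatures(
    fake_generate, "What is the capital of France?", [0.0, 0.2, 1.0]
)
for t, replies in results.items():
    print(f"temperature={t}: {len(set(replies))} distinct replies out of {len(replies)}")
```

To try this against a real model, `fake_generate` would be replaced with a function that calls your chatbot with the given prompt and temperature; the comparison loop itself stays the same.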

The following are example responses that we generated at different temperature settings:

Temperature Setting @ 0.2

Temperature Setting @ 1.0